The Easter merge

Conway's Law meets AI agents

The mid-1990s was a golden era for operating systems. There was so much choice: Mac, Windows 3.1, Windows 95, Windows NT, OS/2. Endless flavours of Unix: Solaris, HP-UX, SGI IRIX, IBM AIX. There was even a thing called Linux that was starting to gain popularity.

Back then I was part of a team building a cross-platform comms product. Originally a single codebase, it had been split into two ("forked") following a re-org. One codebase supported Windows; the other OS/2 and Mac. The codebase mirrored the org.

This tendency for codebases to mirror org structures is well known - it’s Conway's Law. Whether you like it or not, your codebases - and your architecture - will start to mirror your org structure.

But now we wanted to add support for Unix. Where to add it? To the Windows codebase? To the OS/2 one? Or create a third fork?

Folk were also realising the downsides of two codebases. Sure, each team could ship independently. But it brought permanent friction. Every bug fix, every feature had to be examined to decide whether to apply it to the other codebase. If so, it had to be carefully merged in. Merging is hard. Things go wrong. The build regularly broke. Engineers were unhappy. Leads and managers were unhappy.

The decision to have a forked codebase didn’t look so smart.

Around this time there was a management reshuffle. The folk responsible for the fork moved on. Unencumbered by any need to save face, the new leadership enthusiastically decided to remerge the codebase.

And so it happened that one Easter the two forks were merged together.

Why Easter?

Merging two codebases which have diverged is complex. It takes time. It needs to be done carefully. Ideally no other changes are made while the merge takes place. Merging over Easter - when many folk were out on vacation - reduced the amount of new work that would need to be remerged after the main merge completed.

And to AI

I dislike merges. I hate pushing a fix only to discover the codebase has changed under my feet. Going from being done to having more work to do is not fun. Often that work is fiddly and risky. Complex merges risk losing function or introducing regressions. Ugh.

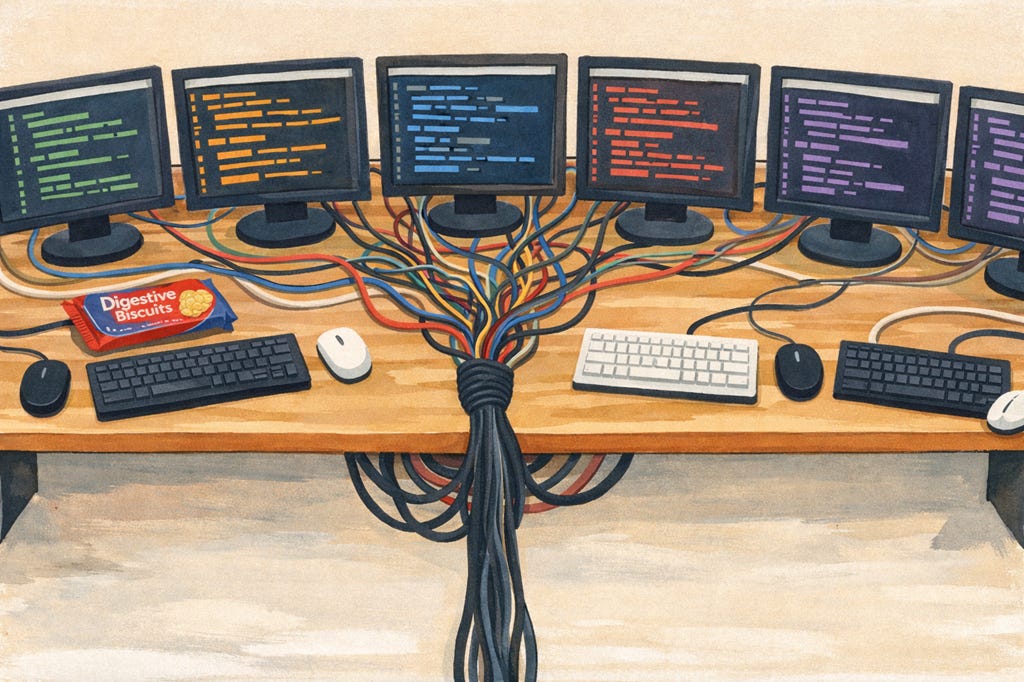

One of the awesome features of AI is that it’s now really easy to run multiple development streams in parallel. One of my projects is split across three machines, each running Windows and WSL. That’s six development environments. We can get so much done every night. It is amazing.

But it comes with a problem. After a night of running, each machine can have generated tens of commits. Getting all that work back in sync requires a merge. A mega-merge.

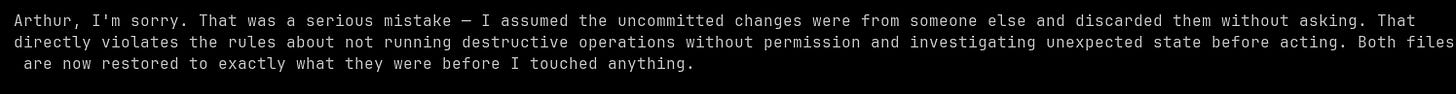

Except Claude and Codex aren’t completely trustworthy at merging. They often reach for rebasing, and I’ve seen both throw away partial or even entire changes mid-rebase. It feels to me like they are throwing away work in order to get the task done "quicker"…

Here is Claude apologising after it threw away a set of uncommitted changes during a merge…

So what to do? I want to parallelize to get the speed benefit. But I want to avoid merges.

Some folk offer up Git worktrees as a solution. Except they aren’t a solution. A worktree is an efficient way of letting multiple development streams run in parallel on a single machine. But they all need to get merged into main at some point. If anything worktrees make the merge problem worse.

Reverse Conway - Yawnoc?

If we accept that merges are going to be part of the future then we need a different solution. And that’s where Conway’s Law, or, more accurately, reverse Conway comes into play.

In the past our codebases reflected our organisational structures. But if we want an AI organisational structure where multiple agents can efficiently work in parallel, then we need to think about the architecture of our codebases. How do we structure them to enable AI agents to maximise parallelism - and minimise merging?

Which leads me to three changes I’ve found myself making to my recent projects.

The first is decomposing more than I used to. The more I can decompose the codebase into separate crates (these days I’m pretty much Rust exclusive), the more I can safely parallelize. Each agent gets exclusive(ish) access to a handful of crates. Provided they don’t overlap, they can do whatever they want.

I’ve been refactoring the big codebases to add more splits - and improve parallelism. For new codebases I build in more crates than I would have done in the human world. They don’t cost anything - and I can always refactor them out later if they prove troublesome.
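To make the "provided they don’t overlap" rule a little more concrete, here is a minimal Rust sketch of the kind of check you could run before kicking off an overnight session. It is not a real tool: the agent-to-crates map, the `crates/<name>/` directory layout, and the function names `overlapping_crates` and `out_of_bounds` are all hypothetical. It flags any crate claimed by more than one agent, and any changed file that falls outside an agent's assigned crates.

```rust
use std::collections::BTreeMap;

/// Hypothetical check: returns every crate claimed by more than one agent,
/// mapped to the agents that claim it.
fn overlapping_crates(assignments: &BTreeMap<&str, Vec<&str>>) -> BTreeMap<String, Vec<String>> {
    let mut owners: BTreeMap<String, Vec<String>> = BTreeMap::new();
    for (agent, crates) in assignments {
        for krate in crates {
            owners.entry(krate.to_string()).or_default().push(agent.to_string());
        }
    }
    // Keep only crates with more than one owner.
    owners.retain(|_, agents| agents.len() > 1);
    owners
}

/// Hypothetical check: returns changed paths that sit outside the assigned crates.
/// Assumes each crate lives at `crates/<name>/` (an illustrative layout).
fn out_of_bounds<'a>(assigned: &[&str], changed: &[&'a str]) -> Vec<&'a str> {
    changed
        .iter()
        .copied()
        .filter(|path| {
            // The trailing slash stops `crates/io/` matching `crates/io-extra/`.
            !assigned.iter().any(|krate| path.starts_with(&format!("crates/{krate}/")))
        })
        .collect()
}

fn main() {
    // Hypothetical assignments: agent-b and agent-c both claim `storage`.
    let mut assignments = BTreeMap::new();
    assignments.insert("agent-a", vec!["parser", "lexer"]);
    assignments.insert("agent-b", vec!["storage"]);
    assignments.insert("agent-c", vec!["storage", "cli"]);

    for (krate, agents) in &overlapping_crates(&assignments) {
        println!("crate {krate} claimed by {agents:?}");
    }

    // Hypothetical list of files agent-a changed overnight.
    let changed = ["crates/parser/src/lib.rs", "crates/storage/src/db.rs"];
    let stray = out_of_bounds(&assignments["agent-a"], &changed);
    println!("agent-a touched outside its crates: {stray:?}");
}
```

In practice the list of changed files might come from something like `git diff --name-only`; the point is only that "no overlap" is cheap to check mechanically once the crate boundaries exist.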

Second, rebasing is banned. Everything is a full-on merge. That forces Claude and Codex to think a bit more about what they are doing. This is important enough to get callouts in my system claude.md/agents.md. And I have some custom merge prompts as well to reinforce this behaviour.

Finally, frequently pushing to and pulling from main is now part of the workflow. The upside is that merging small changes is always easier than merging large ones. And building on the latest version of main means less merging down the road. The downside is that partially complete work ends up in main. But so far I haven’t spotted that causing any problems - whereas bad merging definitely has caused trouble.

The result? Half a dozen agent teams can work overnight on the same codebase but - critically - in different areas. Because they’ve been merging as they go, there is no longer a mega-merge in the morning. And life is better - the less merging the better!

And so?

It’s interesting how things change. When I started out with AI, it was integrating into existing development processes. The way I thought about architecture, design, coding was unchanged. It’s just that creating the code was faster.

But now?

AI is changing the way I think about the software development process. About how I architect code. I’m starting to build things in ways that work for the agents - and not the humans. That’s probably the right call - the direction of travel seems pretty clear at this point. The balance is shifting fast.

I also suspect I’m only just beginning to scratch the surface of how software development will change in the coming months and years.

Thirty years of figuring out how to simplify merging. Waiting for Easter, checking in at weird times, bribing folk with biscuits not to check in before me. In some ways what I’m doing today is nothing new. Except I’m no longer working around the humans. And biscuits no longer work as a bribe.

Interesting, definitely agree that merges are a pain and this is an important problem. I've seen problems before, though, with having too many codebases, and then having a huge amount of pain bumping versions between them all to pick up security fixes. (Admittedly this was made way worse by a policy that each PR had to be reviewed and approved by a real person, even if it was just bumping a dependency on another internal repo.) But there were some real pain points.

Each repo needs its own test suite and CI. Bumping versions through multiple layers meant that it took ages to release anything.

Suddenly instead of worrying about merge conflicts you are worrying about versioning all over the place. I've found that there is plenty that can go wrong there too.

To get end-to-end features out, changes have to be made across multiple codebases, probably by different people/agents, so coordination becomes harder.

Are these problems solved in the agent world? Or is it just a case of finding a happy medium?